A new bombshell scoop from NBC News revealed an internal U.S. Border Patrol memo claiming that 30 percent of camera towers that compose the agency's "Remote Video Surveillance System" (RVSS) program are broken. According to the report, the memo describes "several technical problems" affecting approximately 150 towers.

Except, this isn't a bombshell. What should actually be shocking is that Congressional leaders are acting shocked, like those who recently sent a letter about the towers to Department of Homeland Security (DHS) Secretary Alejandro Mayorkas. These revelations simply reiterate what people who have been watching border technology have known for decades: Surveillance at the U.S.-Mexico border is a wasteful endeavor that is ill-equipped to respond to an ill-defined problem.

Yet, after years of bipartisan recognition that these programs were straight-up boondoggles, there seems to be a competition among political leaders to throw the most money at programs that continue to fail.

Official oversight reports about the failures, repeated breakages, and general ineffectiveness of these camera towers have been public since at least the mid-2000s. So why haven't border security agencies confronted the problem in the last 25 years? One reason is that these cameras are largely political theater; the technology dazzles publicly, then fizzles quietly. Meanwhile, communities that should be thriving at the border are treated like a laboratory for tech companies looking to cash in on often exaggerated—if not fabricated—homeland security threats.

The Acronym Game

EFF is mapping surveillance at the U.S.-Mexico border

In fact, the history of camera towers at the border is an ugly cycle. First, Border Patrol introduces a surveillance program with a catchy name and big promises. Then a few years later, oversight bodies, including Congress, conclude it's an abject mess. But rather than abandon the program once and for all, border security officials come up with a new name, slap on a fresh coat of paint, and continue on. A few years later, history repeats.

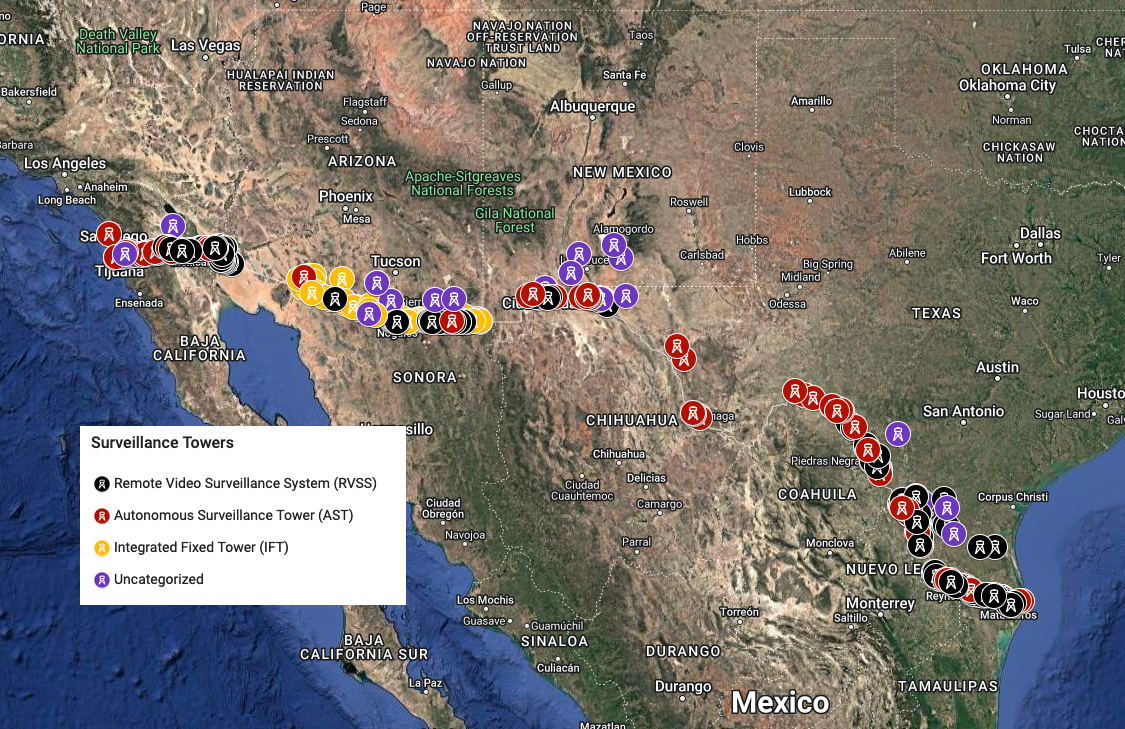

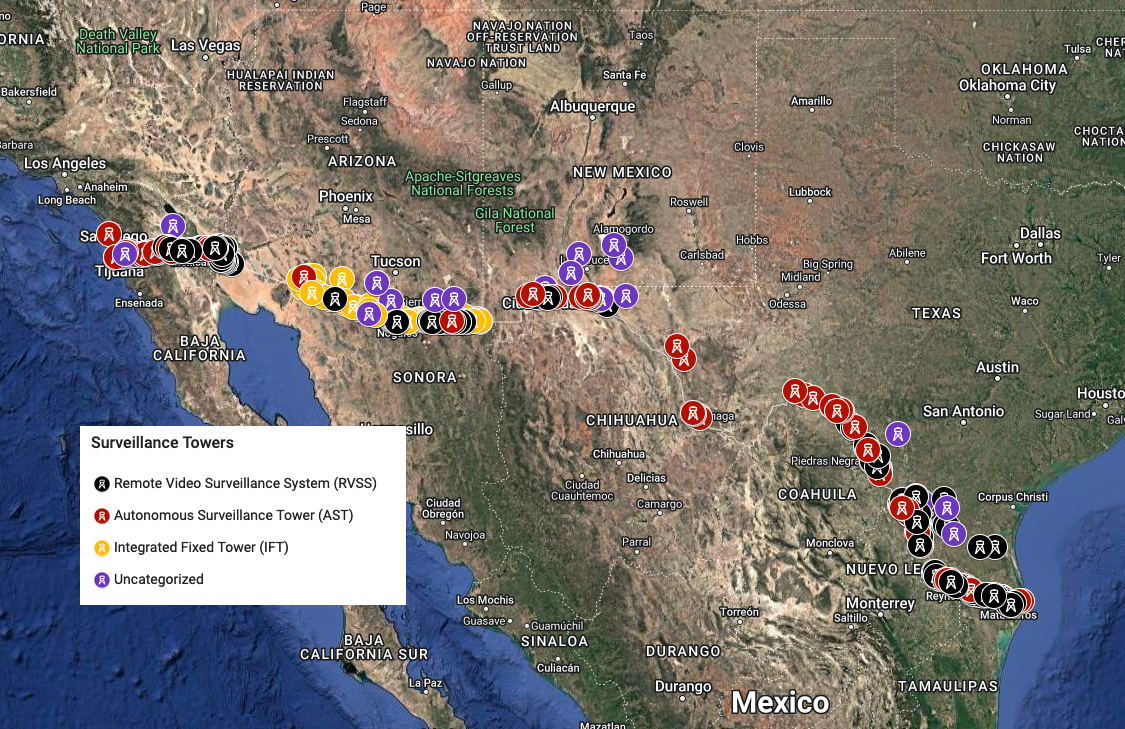

In the early 2000s, there was the Integrated Surveillance Intelligence System (ISIS), with the installation of RVSS towers in places like Calexico, California and Nogales, Arizona, which later became the America's Shield Initiative (ASI). After those failures, there was Project 28 (P-28), the first stage of the Secure Border Initiative (SBInet). When that program was canceled, there were various new programs like the Arizona Border Surveillance Technology Plan, which became the Southwest Border Technology Plan. Border Patrol introduced the Integrated Fixed Tower (IFT) program and the RVSS Update program, then the Autonomous Surveillance Tower (AST) program. And now we've got a whole slew of new acronyms, including the Integrated Surveillance Tower (IST) program and the Consolidated Towers and Surveillance Equipment (CTSE) program.

Feeling overwhelmed by acronyms? Welcome to the shell game of border surveillance. Here's what happens whenever oversight bodies take a closer look.

ISIS and ASI

An RVSS from the early 2000s in Calexico, California.

Let's start with the Integrated Surveillance Intelligence System (ISIS), a program composed of towers, sensors and databases originally launched in 1997 by the Immigration and Naturalization Service. A few years later, INS was reorganized into the U.S. Department of Homeland Security (DHS), and ISIS became part of the newly formed Customs & Border Protection (CBP).

It was only a matter of years before the DHS Inspector General concluded that ISIS was a flop: "ISIS remote surveillance technology yielded few apprehensions as a percentage of detection, resulted in needless investigations of legitimate activity, and consumed valuable staff time to perform video analysis or investigate sensor alerts."

During Senate hearings, Sen. Judd Gregg (R-NH) complained about a "total breakdown in the camera structures," and that the U.S. government "bought cameras that didn't work."

Around 2004, ISIS was folded into the new America's Shield Initiative (ASI), which officials claimed would fix those problems. CBP Commissioner Robert Bonner even promoted ASI as a "critical part of CBP’s strategy to build smarter borders." Yet, less than a year later, Bonner stepped down, and the Government Accountability Office (GAO) found the ASI had numerous unresolved issues necessitating a total reevaluation. CBP disputed none of the findings and explained it was dismantling ASI in order to move on to something new that would solve everything: the Secure Border Initiative (SBI).

Reflecting on the ISIS/ASI programs in 2008, Rep. Mike Rogers (R-AL) said, "What we found was a camera and sensor system that was plagued by mismanagement, operational problems, and financial waste. At that time, we put the Department on notice that mistakes of the past should not be repeated in SBInet."

You can guess what happened next.

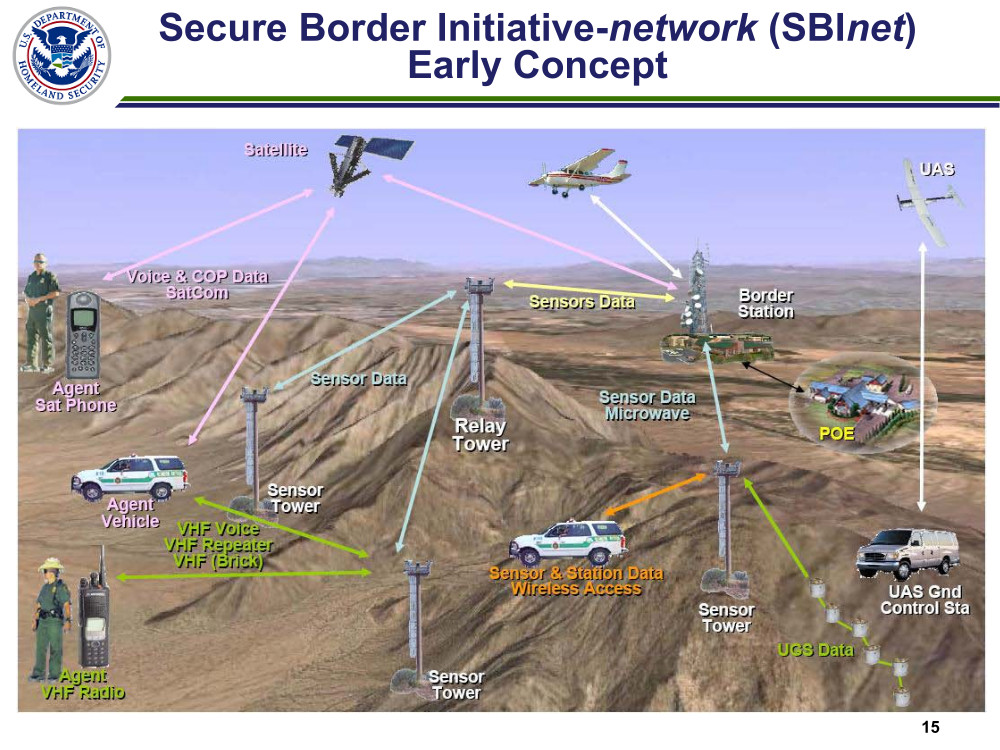

P-28 and SBInet

The subsequent iteration was called Project 28, which then evolved into the Secure Border Initiative's SBInet, starting in the Arizona desert.

In 2010, the DHS Chief Information Officer summarized its comprehensive review: "'Project 28,' the initial prototype for the SBInet system, did not perform as planned. Project 28 was not scalable to meet the mission requirements for a national comment [sic] and control system, and experienced significant technical difficulties."

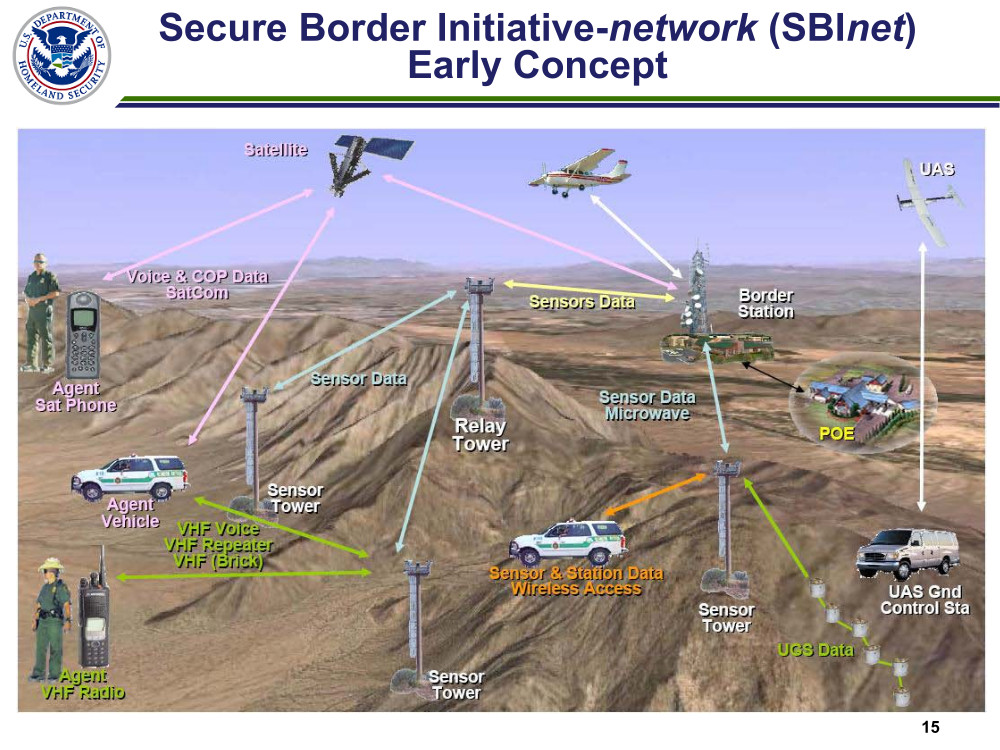

A DHS graphic illustrating the SBInet concept

Meanwhile, bipartisan consensus had emerged about the failure of the program, due to the technical problems as well as contracting irregularities and cost overruns.

As Rep. Christopher Carney (D-PA) said in his prepared statement during Congressional hearings:

"P–28 and the larger SBInet program are supposed to be a model of how the Federal Government is leveraging technology to secure our borders, but Project 28, in my mind, has achieved a dubious distinction as a trifecta of bad Government contracting: Poor contract management; poor contractor performance; and a poor final product."

Rep. Rogers' remarks were even more cutting: "You know the history of ISIS and what a disaster that was, and we had hoped to take the lessons from that and do better on this and, apparently, we haven’t done much better."

Perhaps most damning of all was yet another GAO report that found, "SBInet defects have been found, with the number of new defects identified generally increasing faster than the number being fixed—a trend that is not indicative of a system that is maturing."

In January 2011, DHS Secretary Janet Napolitano canceled the $3-billion program.

IFTs, RVSSs, and ASTs

Following the termination of SBInet, the Christian Science Monitor ran the naive headline, "US cancels 'virtual fence' along Mexican border. What's Plan B?" Three years later, the newspaper answered its own question with another question, "'Virtual' border fence idea revived. Another 'billion dollar boondoggle'?"

Boeing was the main contractor blamed for SBInet's failure, but Border Patrol ultimately awarded one of the biggest new contracts to Elbit Systems, which had been one of Boeing's subcontractors on SBInet. Elbit began installing IFTs (again, that stands for "Integrated Fixed Towers") in many of the exact same places slated for SBInet. In some cases, the equipment was simply swapped on an existing SBInet tower.

Meanwhile, another contractor, General Dynamics Information Technology, began installing new RVSS towers and upgrading old ones as part of the RVSS-U program. Border Patrol also started installing hundreds of "Autonomous Surveillance Towers" (ASTs) by yet another vendor, Anduril Industries, embracing the new buzz of artificial intelligence.

An Autonomous Surveillance Tower and an RVSS tower along the Rio Grande.

In 2017, the GAO complained that the Border Patrol's poor data quality made the agency "limited in its ability to determine the mission benefits of its surveillance technologies." In one case, Border Patrol stations in the Rio Grande Valley claimed IFTs assisted in 500 cases in just six months. The problem with that assertion is that there are no IFTs in Texas or, in fact, anywhere outside Arizona.

A few years later, the DHS Inspector General issued yet another report indicating not much had improved:

"CBP faced additional challenges that reduced the effectiveness of its existing technology. Border Patrol officials stated they had inadequate personnel to fully leverage surveillance technology or maintain current information technology systems and infrastructure on site. Further, we identified security vulnerabilities on some CBP servers and workstations not in compliance due to disagreement about the timeline for implementing DHS configuration management requirements.

"CBP is not well-equipped to assess its technology effectiveness to respond to these deficiencies. CBP has been aware of this challenge since at least 2017 but lacks a standard process and accurate data to overcome it.

"Overall, these deficiencies have limited CBP’s ability to detect and prevent the illegal entry of noncitizens who may pose threats to national security."

Around that same time, the RAND Corporation published a study funded by DHS that found "strong evidence" the IFT program was having no impact on apprehension levels at the border, and only "weak" and "inconclusive" evidence that the RVSS towers were having any effect on apprehensions.

And yet, border authorities and their supporters in Congress are continuing to promote unproven, AI-driven technologies as the latest remedy for years of failures, including the ones voiced in the memo obtained by NBC News. These systems involve cameras controlled by algorithms that automatically identify and track objects or people of interest. But in an age when algorithmic errors and bias are being identified nearly every day in every sector including law enforcement, it is unclear how this technology has earned the trust of the government.

History Keeps Repeating

That brings us to today, with reportedly 150 or more towers out of service. So why does Washington keep supporting surveillance at the border? Why are they proposing record-level funding for a system that seems irreparable? Why have they abandoned their duty to scrutinize federal programs?

Well, one reason may be that treating problems at the border as humanitarian crises or pursuing foreign policy or immigration reform measures isn't as politically useful as promoting a phantom "invasion" that requires a military-style response. Another reason may be that tech companies and defense contractors wield immense amounts of influence and stand to make millions, if not billions, profiting off border surveillance. The price is paid by taxpayers, but also in the civil liberties of border communities and the human rights of asylum seekers and migrants.

But perhaps the biggest reason this history keeps repeating itself is that no one is ever really held accountable for wasting potentially billions of dollars on high-tech snake oil.