EFF’s Reflections from RightsCon 2025

EFF was delighted to once again attend RightsCon, this year hosted in Taipei, Taiwan from 24 to 27 February. As in previous years, RightsCon provided an invaluable opportunity for human rights experts, technologists, activists, and government representatives to discuss pressing human rights challenges and their potential solutions.

For some attending from EFF, this was the first RightsCon. For others, their 10th or 11th. But for all, one message was spoken loud and clear: the need to collectivize digital rights in the face of growing authoritarian governments and leaders occupying positions of power around the globe, as well as Big Tech’s creation and provision of consumer technologies for use in rights-abusing ways.

EFF hosted a multitude of sessions and appeared on many more panels, covering topics from a global perspective on platform accountability frameworks, to the perverse gears supporting transnational repression, to tech tools for queer liberation online. Here we share some of our highlights.

Major Concerns Around Funding Cuts to Civil Society

Two major shifts affecting the digital rights space underlined the renewed need for solidarity and collective responses. First, the Trump administration’s summary (and largely illegal) funding cuts for the global digital rights movement from USAID, the State Department, the National Endowment for Democracy and other programs, are impacting many digital rights organizations across the globe and deeply harming the field. By some estimates, U.S. government cuts, along with major changes in the Netherlands and elsewhere, will result in a 30% reduction in the size of the global digital rights community, especially in global majority countries.

Second, the Trump administration’s announcement that it would respond to the regulation of U.S. tech companies with tariffs has thrown another wrench into the efforts of many of us working towards improved tech accountability.

We know that attacks on civil society, especially on funding, are a go-to strategy for authoritarian rulers, so this is deeply troubling. Even in more democratic settings, this reinforces the shrinking of civic space, hindering our collective ability to organize and fight for better futures. Given the size of the cuts, it’s clear that other funders will struggle to counterbalance the dwindling U.S. public funding, but they must try. We urge other countries and regions, as well as individuals and a broader range of philanthropy, to step up to ensure that the crucial work defending human rights online will be able to continue.

Community Solidarity with Alaa Abd El-Fattah and Laila Soueif

The call to free Alaa Abd El-Fattah from illegal detention in Egypt was a prominent message heard throughout RightsCon. During the opening ceremony, Access Now’s new Executive Director, Alejandro Mayoral, talked about Alaa’s keynote speech at the very first RightsCon and stated: “We stand in solidarity with him and all civil society actors, activists, and journalists whose governments are silencing them.” The opening ceremony also included a video address from Alaa’s mother, Laila Soueif, in which she urged viewers to “not let our defeat be permanent.” Sadly, immediately after that address Ms. Soueif was admitted to the hospital as a result of her longstanding hunger strike in support of her son.

The calls to #FreeAlaa and save Laila were again reaffirmed during the closing ceremony in a keynote by Sara Alsherif, Migrant Digital Justice Programme Manager at UK-based digital rights group Open Rights Group and close friend of Alaa. Referencing Alaa’s early work as a digital activist, Alsherif said: “He understood that the fight for digital rights is at the core of the struggle for human rights and democracy.” She closed by reminding the hundreds-strong audience that “Alaa could be any one of us … Please do for him what you would want us to do for you if you were in his position.”

EFF and Open Rights Group also hosted a session about Alaa and his work as a blogger, coder, and activist over more than two decades. The session included a reading from Alaa’s book and a discussion with participants on strategies.

Platform Accountability in Crisis

Online platforms like Facebook and services like Google are crucial spaces for civic discourse and access to information. Many sessions at RightsCon were dedicated to the growing concern that these platforms have also become powerful tools for political manipulation, censorship, and control. With the return of the Trump administration, Facebook’s shift in hate speech policies, and the growing geo-politicization of digital governance, many now consider platform accountability to be in crisis.

A dedicated “Day 0” event, co-organized by Access Now and EFF, set the stage for these discussions with a high-level panel reflecting on alarming developments in platform content policies and enforcement. Drawing on Access Now’s “rule of law checklist,” speakers stressed how a small group of powerful individuals increasingly dictates how platforms operate, raising concerns about democratic resilience and accountability. They also highlighted the need for deeper collaboration with global majority countries on digital governance, taking into account diverse regional challenges. Beyond regulation, the conversation turned to the potential of user-empowered alternatives, such as decentralized services, to counter platform dominance and offer more sustainable governance models.

A key point of attention was the EU’s Digital Services Act (DSA), a rulebook with the potential to shape global responses to platform accountability but one that also leaves many crucial questions open. The conversation naturally transitioned to the workshop organized by the DSA Human Rights Alliance, which focused more specifically on the global implications of DSA enforcement and how principles for a “Human Rights-Centered Application of the DSA” could foster public interest and collaboration.

Fighting Internet Shutdowns and Anti-Censorship Tools

Many sessions discussed how internet shutdowns and other forms of internet blocking impact the daily lives of people under extremely oppressive regimes. The overwhelming conclusion was that we need encryption to remain strong in countries with better conditions of democracy in order to continue to bridge access to services in places where democracy is weak. Breaking encryption or blocking important tools for “national security,” elections, exams, protests, or for law enforcement only endangers freedom of information for those with less political power. In turn, these actions empower governments to take possibly inhumane actions while the “lights are out” and people can’t tell the rest of the world what is happening to them.

Another pertinent point coming out of RightsCon was that anti-censorship tools work best when everyone is using them. Diversity of users not only helps to create bridges for others who can’t access the internet through normal means, but it also helps to create traffic that looks innocuous enough to bypass censorship blockers. Discussions highlighted that the more tools we have to connect people without unique traffic, the fewer chances there are for government censorship technology to keep their traffic from going through. We know some governments are not above completely shutting down internet access. But in cases where they still allow the internet, user diversity is key. It also helps to move away from narratives that imply “only criminals” use encryption. Encryption is for everyone, and everyone should use it, because tomorrow’s internet could be tested by future threats.

Palestine: Human Rights in Times of Conflict

At this year’s RightsCon, Palestinian non-profit organization 7amleh, in collaboration with the Palestinian Digital Rights Coalition and supported by dozens of international organizations including EFF, launched #ReconnectGaza, a global campaign to rebuild Gaza’s telecommunications network and safeguard the right to communication as a fundamental human right. The campaign comes on the back of more than 17 months of internet blackouts and destruction of Gaza’s telecommunications infrastructure by the Israeli authorities. Estimates indicate that 75% of Gaza’s telecommunications infrastructure has been damaged, with 50% completely destroyed. This loss of connectivity has crippled essential services, preventing healthcare coordination, disrupting education, and isolating Palestinians from the digital economy.

On another panel, EFF raised concerns with Microsoft representatives about an AP report that emerged just prior to RightsCon about the company providing services to the Israeli Defense Forces that are being used as part of the repression of Palestinians in Gaza as well as in the bombings in Lebanon. We noted that Microsoft’s pledges to support human rights seemed to be in conflict with this, something EFF has already raised about Google and Amazon and their work on Project Nimbus. Microsoft promised to look into that allegation, as well as one about its provision of services to Saudi Arabia.

In the RightsCon opening ceremony, Alejandro Mayoral noted that: “Today, the world’s eyes are on Gaza, where genocide has taken place, AI is being weaponized, and people’s voices are silenced as the first phase of the fragile Palestinian-Israeli ceasefire is realized.” He followed up by saying, “We are surrounded by conflict. Palestine, Sudan, Myanmar, Ukraine, and beyond…where the internet and technology are being used and abused at the cost of human lives.” Following this keynote, Access Now’s MENA Policy and Advocacy Director, Marwa Fatafta, hosted a roundtable to discuss technology in times of conflict, where takeaways included the reminder that “there is no greater microcosm of the world’s digital rights violations happening in our world today than in Gaza. It’s a laboratory where the most invasive and deadly technologies are being tested and deployed on a besieged population.”

Countering Cross-Border Arbitrary Surveillance and Transnational Repression

Concerns about ongoing legal instruments that can be misused to expand transnational repression were also front-and-center at RightsCon. During a Citizen Lab-hosted session we participated in, participants examined how cross-border policing can become a tool to criminalize marginalized groups, the economic incentives driving these criminalization trends, and the urgent need for robust, concrete, and enforceable international human rights safeguards. They also noted that the newly approved UN Cybercrime Convention, with only minimal protections, adds yet another mechanism for broadening cross-border surveillance powers, thereby compounding the proliferation of legal frameworks that lack adequate guardrails against misuse.

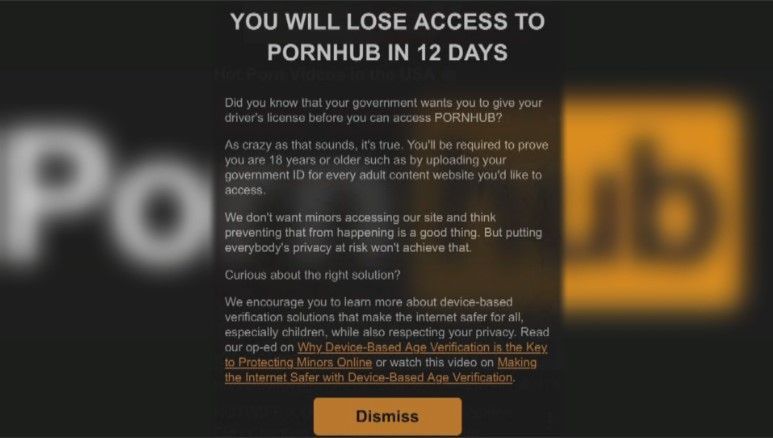

Age-Gating the Internet

EFF co-hosted a roundtable session to workshop a human rights statement addressing government mandates to restrict young people’s access to online services and specific legal online speech. Participants in the roundtable represented five continents and included representatives from civil society and academia, some of whom focused on digital rights and some on children’s rights. Many of the participants will continue to refine the statement in the coming months.

Hard Conversations

EFF participated in a cybersecurity conversation with representatives of the UK government, where we raised serious concerns about the government’s hostility to strong encryption and the insecurity it has created, both for UK citizens and for the people who communicate with them, by pressuring Apple to ensure UK law enforcement access to all communications.

Equity and Inclusion in Platform Discussions, Policies, and Trust & Safety

The platform economy is an evergreen RightsCon topic, and this year was no different, with conversations ranging from the impact of content moderation on free expression to transparency in monetization policies, and much in between. Given the recent developments at Meta, X, and elsewhere, many participants were rightfully eager to engage.

EFF co-organized an informal meetup of global content moderation experts with whom we regularly convene, and participated in a number of sessions, such as on the decline of user agency on platforms in the face of growing centralized services, as well as ways to expand choice through decentralized services and platforms. One notable session on this topic was hosted by the Center for Democracy and Technology on addressing global inequities in content moderation, in which speakers presented findings from their research on the moderation by various platforms of content in Maghrebi Arabic and Kiswahili, as well as a forthcoming paper on Quechua.

Reflections and Next Steps

RightsCon is a conference that reminds us of the size and scope of the digital rights movement around the world. Holding it in Taiwan, in the wake of the huge funding cuts affecting so many, created an urgency that was palpable across the spectrum of sessions and events. We know that we’ve built a robust community that can weather the storms. In the face of overwhelming pressure from government and corporate actors, it’s essential that we resist the temptation to isolate and instead continue to push forward with collectivization and collaboration, speaking truth to power from the U.S. to Germany and across the globe.